Introduction

In the rapidly evolving field of artificial intelligence (AI), ensuring the reliability and robustness of language models is paramount. These models power a wide range of applications, from virtual assistants to automated customer service systems, and need to be both accurate and dependable. One promising approach is to apply game theory, a branch of mathematics that studies strategic interactions among rational agents. This blog post explores how game theory can be used to enhance the reliability of language models, with a technical and practical explanation of the concepts involved.

Understanding Game Theory

Game theory is a mathematical framework designed to analyze the interactions between different decision-makers, known as players. It focuses on the strategies that these players employ to achieve their objectives, often in situations where the outcome depends on the actions of all participants. The key components of game theory include:

- Players: The decision-makers in the game.

- Strategies: The plans of action that players can choose.

- Payoffs: The rewards or penalties that players receive based on the outcome of the game.

- Equilibrium: A stable state where no player can benefit by changing their strategy unilaterally.
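To make these four components concrete, here is a minimal Python sketch that checks every strategy pair of a small two-player game for the equilibrium condition described above. The prisoner's dilemma payoffs and the function name `pure_nash_equilibria` are illustrative choices, not something prescribed by any library.

```python
from itertools import product

def pure_nash_equilibria(payoffs):
    """Return all pure-strategy Nash equilibria of a two-player game.

    payoffs[(r, c)] = (row player's payoff, column player's payoff).
    """
    rows = sorted({r for r, _ in payoffs})
    cols = sorted({c for _, c in payoffs})
    equilibria = []
    for r, c in product(rows, cols):
        row_payoff, col_payoff = payoffs[(r, c)]
        # The row player cannot gain by switching rows while the column stays fixed.
        row_ok = all(payoffs[(r2, c)][0] <= row_payoff for r2 in rows)
        # The column player cannot gain by switching columns while the row stays fixed.
        col_ok = all(payoffs[(r, c2)][1] <= col_payoff for c2 in cols)
        if row_ok and col_ok:
            equilibria.append((r, c))
    return equilibria

# Prisoner's dilemma with illustrative payoffs (negative years in prison).
prisoners_dilemma = {
    ("cooperate", "cooperate"): (-1, -1),
    ("cooperate", "defect"): (-3, 0),
    ("defect", "cooperate"): (0, -3),
    ("defect", "defect"): (-2, -2),
}

print(pure_nash_equilibria(prisoners_dilemma))  # [('defect', 'defect')]
```

Mutual defection is the only pair from which neither player can improve by deviating alone, even though both would be better off cooperating.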

Game theory has been applied in various fields, including economics, political science, and biology, to model competitive and cooperative behaviors. In AI, it offers a structured way to analyze and design interactions between intelligent agents. Let's explore in more detail how game theory can be leveraged in developing LLMs.

Detailed Example: Applying Game Theory to Language Model Reliability

Scenario: Adversarial Training in Language Models

Background

Imagine we are developing a language model intended to generate human-like text for customer support chatbots. The challenge is to ensure that the responses generated are not only coherent and contextually appropriate but also resistant to manipulation or adversarial inputs.

Game Theory Framework

To improve the reliability of our language model, we can frame the problem using game theory. We define two players in this game:

- Generator (G): The language model that generates text.

- Adversary (A): An adversarial model that tries to find flaws, biases, or vulnerabilities in the generated text.

This setup forms a zero-sum game where the generator aims to produce flawless text (maximize quality), while the adversary aims to expose weaknesses (minimize quality).
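A small payoff table shows what the zero-sum framing means in practice. The sketch below assumes two hypothetical generator strategies, two hypothetical adversary strategies, and made-up quality scores; the adversary's payoff is simply the negative of each score.

```python
# Rows: generator strategies; columns: adversary strategies.
# Entries are hypothetical quality scores for the generator. The adversary
# receives the negative of each entry, which is what makes the game zero-sum.
quality = {
    "terse_reply":    {"typo_attack": 0.4, "off_topic_probe": 0.7},
    "detailed_reply": {"typo_attack": 0.6, "off_topic_probe": 0.5},
}

def generator_security_level(matrix):
    """Best worst-case quality the generator can guarantee with a pure strategy."""
    return max((min(row.values()), name) for name, row in matrix.items())

def adversary_security_level(matrix):
    """Lowest best-case quality the adversary can hold the generator to."""
    attacks = next(iter(matrix.values())).keys()
    return min((max(row[a] for row in matrix.values()), a) for a in attacks)

maximin, best_reply = generator_security_level(quality)
minimax, best_attack = adversary_security_level(quality)
print(best_reply, maximin)    # detailed_reply 0.5
print(best_attack, minimax)   # typo_attack 0.6
```

With these made-up numbers the generator can guarantee a quality of 0.5, while the adversary can cap it at 0.6. Because the two values differ, no pair of pure strategies is stable, and an equilibrium would require randomized (mixed) strategies.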

Adversarial Training Process

- Initialization:

  - Generator (G): Initialized to produce text based on training data (e.g., customer service transcripts).

  - Adversary (A): Initialized with the ability to analyze and critique text, identifying potential weaknesses (e.g., incoherence, inappropriate responses).

- Iteration Process:

  - Step 1: Text Generation: The generator produces a batch of text samples based on given inputs (e.g., customer queries).

  - Step 2: Adversarial Analysis: The adversary analyzes these text samples and identifies weaknesses. It may use techniques such as:

    - Text perturbation: Introducing small changes to the input to see if the output becomes nonsensical.

    - Contextual checks: Ensuring that the generated response is relevant to the context of the query.

    - Bias detection: Checking for biased or inappropriate content in the response.

  - Step 3: Feedback Loop: The adversary provides feedback to the generator, highlighting areas of improvement.

  - Step 4: Generator Update: The generator uses this feedback to adjust its parameters, improving its ability to produce high-quality text.

- Convergence:

  - This iterative process continues until the generator reaches a point where the adversary finds it increasingly difficult to identify flaws. At this stage, the generator’s responses are considered reliable and robust.
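The loop above can be sketched with toy stand-ins. In the Python example below, `ToyGenerator` and `ToyAdversary` are hypothetical classes invented for illustration: the "generator" is a keyword lookup rather than a neural model, the "adversary" only deletes one character from a keyword, and the "update" memorizes failing spellings instead of adjusting parameters by gradient descent. It is meant to show the shape of the generate, attack, feedback, update cycle, not a real training procedure.

```python
import random

FALLBACK = "Sorry, I did not understand that."

class ToyGenerator:
    """Stand-in for a language model: answers when it recognizes a keyword."""

    def __init__(self):
        self.known = {"password": "I can help you reset your password."}

    def respond(self, query):
        for word in query.lower().split():
            if word in self.known:
                return self.known[word]
        return FALLBACK

    def update(self, failures):
        # "Training" here just memorizes the spelling that caused each failure.
        for misspelled, intended in failures:
            self.known[misspelled] = self.known[intended]

class ToyAdversary:
    """Perturbs a keyword by deleting one character and reports failures."""

    def __init__(self, seed=0):
        self.rng = random.Random(seed)

    def attack(self, generator, keyword, tries=5):
        failures = []
        for _ in range(tries):
            i = self.rng.randrange(len(keyword))
            misspelled = keyword[:i] + keyword[i + 1:]
            if generator.respond(f"help with my {misspelled}") == FALLBACK:
                failures.append((misspelled, keyword))
        return failures

generator, adversary = ToyGenerator(), ToyAdversary()
history = []  # number of failures the adversary found in each round
for round_number in range(20):
    failures = adversary.attack(generator, "password")  # Steps 1 and 2
    history.append(len(failures))
    if not failures:            # convergence: the adversary found nothing
        break
    generator.update(failures)  # Steps 3 and 4

print(history)
```

The printed list records how many flaws the adversary found per round. Note that a round with zero failures only means this particular adversary ran out of successful attacks within its five tries, which mirrors the caveat that convergence is relative to the adversary's strength.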

Technical Details

- Generator Model: Typically, a Transformer-based model like GPT (Generative Pre-trained Transformer) is used. It is fine-tuned on specific datasets related to customer service.

- Adversary Model: Can be a rule-based system or another neural network designed to critique text. It can use signals such as perplexity, semantic similarity, and sentiment scores to evaluate the text.

- Objective Function: The generator’s objective is to minimize a loss function that incorporates both traditional language modeling loss (e.g., cross-entropy) and adversarial feedback. The adversary’s objective is to maximize this loss, highlighting the generator’s weaknesses.
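One way to write the objective described above is as a min-max problem. The notation is introduced here for illustration and is an assumption, not part of the original setup: $\theta$ denotes the generator's parameters, $\phi$ the adversary's parameters, $\mathcal{L}_{\text{LM}}$ the language modeling loss, $\mathcal{L}_{\text{adv}}$ the adversarial term, and $\lambda \ge 0$ a weight that balances the two.

$$
\min_{\theta} \; \max_{\phi} \;\; \mathcal{L}_{\text{LM}}(\theta) + \lambda \, \mathcal{L}_{\text{adv}}(\theta, \phi)
$$

Since $\mathcal{L}_{\text{LM}}$ does not depend on $\phi$ in this formulation, the adversary effectively maximizes only the second term, while the generator minimizes the sum.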

Example in Practice

Customer Query: “I need help with my account password.”

Generator’s Initial Response: “Sure, please provide your account number.”

Adversary’s Analysis:

- Text Perturbation: Changes “account password” to “account passwrd” to see if the generator still understands the query.

- Contextual Check: Ensures the response is relevant to password issues.

- Bias Detection: Checks for any inappropriate or biased language.

Adversary’s Feedback:

- Given the perturbed query, the generator failed to recognize the misspelled word “passwrd” and produced a generic response.

- The response did not offer immediate solutions to password-related issues.

Generator Update:

- The generator’s training is adjusted to better handle common misspellings.

- Additional training data focusing on password-related queries is used to improve contextual understanding.

Improved Generator Response: “Sure, please provide your account number so I can assist with resetting your password.”

Outcome:

- The generator’s response is now more robust to input variations and contextually appropriate, thanks to the adversarial training loop.
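As a lightweight illustration of robustness to misspellings, the sketch below normalizes a query before it reaches the model. This is a different technique from the retraining described in the example: it is an input preprocessing step built on Python's standard `difflib` module, and the vocabulary list and the 0.8 similarity cutoff are assumptions chosen for the demonstration.

```python
import difflib

# Hypothetical vocabulary of terms the support bot is expected to handle.
VOCABULARY = ["account", "password", "reset", "help", "billing"]

def normalize_query(query, vocabulary=VOCABULARY, cutoff=0.8):
    """Replace each word with its closest vocabulary entry when one is similar enough."""
    corrected = []
    for word in query.lower().split():
        matches = difflib.get_close_matches(word, vocabulary, n=1, cutoff=cutoff)
        corrected.append(matches[0] if matches else word)
    return " ".join(corrected)

print(normalize_query("I need help with my account passwrd"))
# i need help with my account password
```

A high cutoff keeps unrelated words from being rewritten, at the cost of missing heavier misspellings. In a real system this trade-off would need to be tuned against actual customer queries.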

This example illustrates how game theory, and the adversarial training framework in particular, can improve the reliability of language models. By treating the interaction between the generator and the adversary as a strategic game, we can iteratively improve the model’s robustness and accuracy. The resulting model is better placed to generate high-quality text while remaining resilient to manipulation and contextual variation, which makes it more useful in real-world applications.

The Relevance of Game Theory in AI Development

The integration of game theory into AI development provides several advantages:

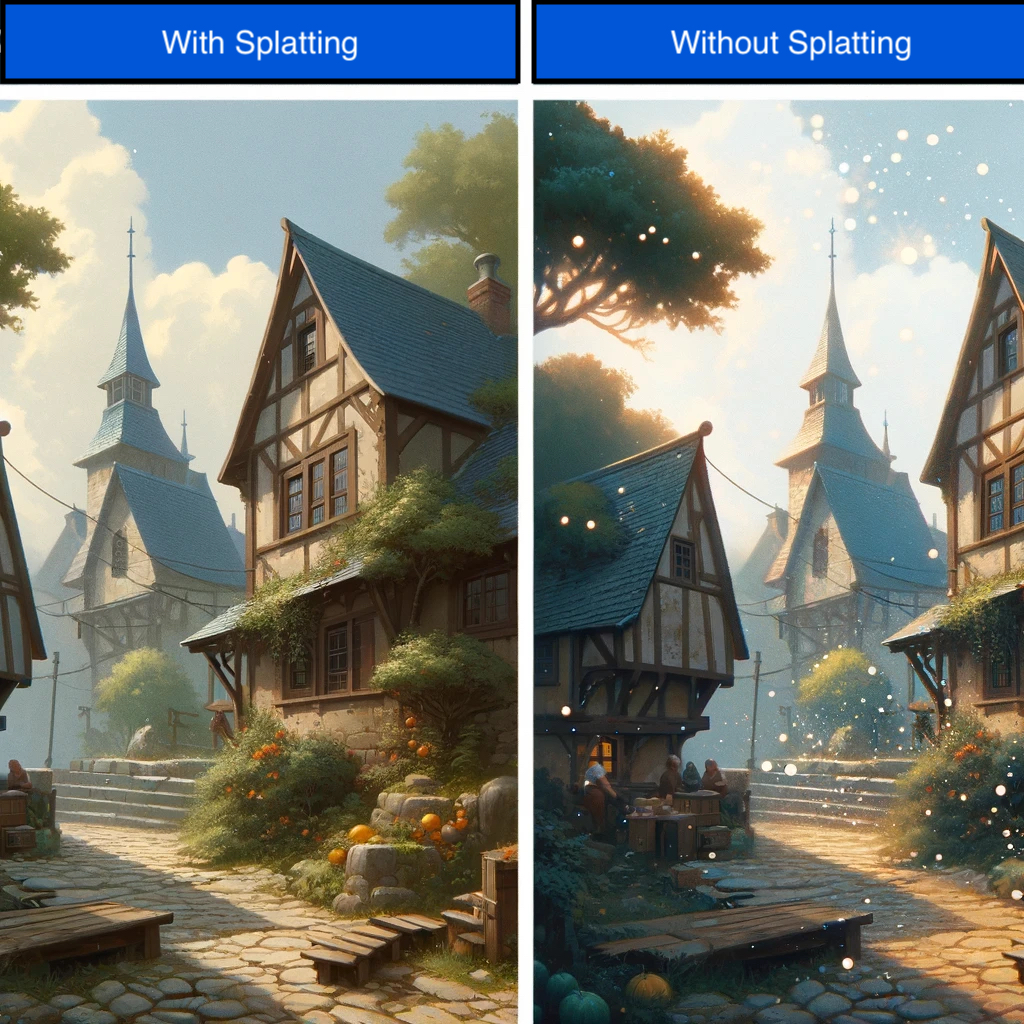

- Strategic Decision-Making: Game theory helps AI systems make decisions that consider the actions and reactions of other agents, leading to more robust and adaptive behaviors.

- Optimization of Interactions: By modeling interactions as games, AI developers can optimize the strategies of their models to achieve better outcomes.

- Conflict Resolution: Game theory provides tools for resolving conflicts and finding equilibria in multi-agent systems, which is crucial for cooperative AI scenarios.

- Robustness and Reliability: Analyzing AI behavior through the lens of game theory can identify vulnerabilities and improve the overall reliability of language models.

Applying Game Theory to Language Models

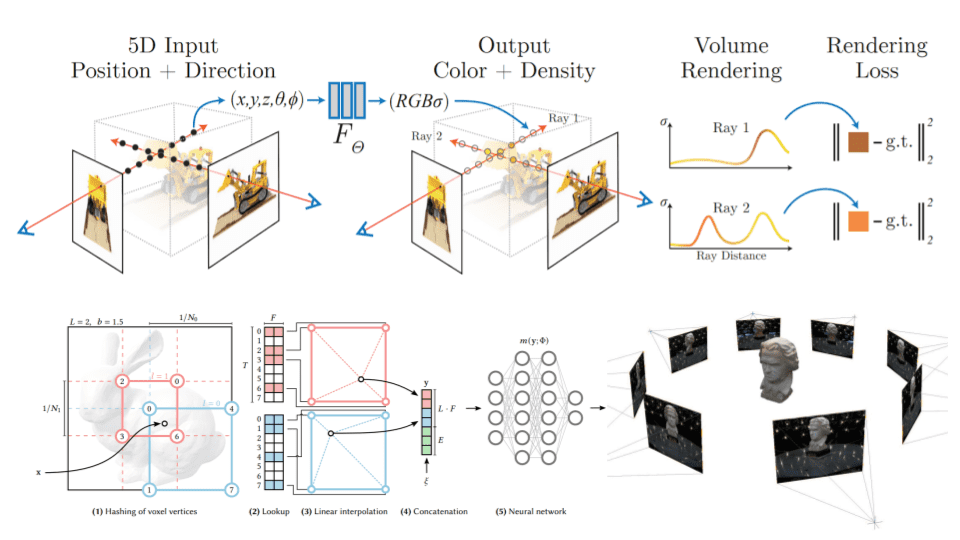

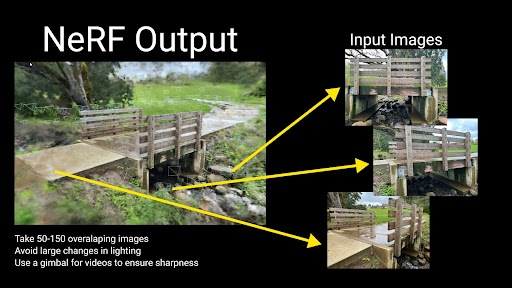

Adversarial Training

One practical application of game theory in improving language models is adversarial training. In this context, two models are pitted against each other: a generator and an adversary. The generator creates text, while the adversary attempts to detect flaws or inaccuracies in the generated text. This interaction can be modeled as a zero-sum game, where the generator aims to maximize its performance, and the adversary aims to minimize it.

Example: Generative Adversarial Networks (GANs) are a well-known implementation of this concept. In language models, a similar approach can be used where the generator model continuously improves by learning to produce text that the adversary finds increasingly difficult to distinguish from human-written text.
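For reference, the standard GAN objective makes the zero-sum structure explicit. Here $G$ is the generator, $D$ is the discriminator (playing the role of the adversary), $p_{\text{data}}$ is the distribution of real samples, and $p_{z}$ is the noise distribution fed to the generator:

$$
\min_{G} \; \max_{D} \;\; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to assign high probability to real samples and low probability to generated ones, while the generator tries to make that distinction fail. Because sampling discrete tokens is not differentiable, adaptations of this idea to text typically need workarounds such as reinforcement-learning-style updates or continuous relaxations.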

Cooperative Learning

Another approach involves cooperative game theory, where multiple agents collaborate to achieve a common goal. In the context of language models, different models or components can work together to enhance the overall system performance.

Example: Ensemble methods combine the outputs of multiple models to produce a more accurate and reliable final result. By treating each model as a player in a cooperative game, developers can optimize their interactions to improve the robustness of the language model.
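One concrete tool from cooperative game theory is the Shapley value, which splits a jointly achieved payoff among players according to their average marginal contribution. The sketch below applies it to a three-model ensemble; the model names and the coalition accuracies are hypothetical numbers chosen for illustration, and in practice each one would have to be measured on a validation set.

```python
from itertools import permutations

# Hypothetical validation accuracy of every coalition of three ensemble members.
coalition_accuracy = {
    frozenset(): 0.0,
    frozenset({"A"}): 0.70,
    frozenset({"B"}): 0.60,
    frozenset({"C"}): 0.60,
    frozenset({"A", "B"}): 0.80,
    frozenset({"A", "C"}): 0.80,
    frozenset({"B", "C"}): 0.65,
    frozenset({"A", "B", "C"}): 0.85,
}

def shapley_values(players, value):
    """Average each player's marginal contribution over all join orders."""
    totals = {p: 0.0 for p in players}
    orders = list(permutations(players))
    for order in orders:
        coalition = frozenset()
        for player in order:
            with_player = coalition | {player}
            totals[player] += value[with_player] - value[coalition]
            coalition = with_player
    return {p: total / len(orders) for p, total in totals.items()}

shares = shapley_values(["A", "B", "C"], coalition_accuracy)
print({p: round(s, 3) for p, s in shares.items()})
```

The shares add up to the full ensemble's accuracy of 0.85, and model A receives the largest share because it contributes the most on average. Enumerating all join orders grows factorially with the number of models, so larger ensembles would need a sampling approximation.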

Mechanism Design

Mechanism design is a branch of game theory that focuses on designing rules and incentives to achieve desired outcomes. In AI, this can be applied to create environments where language models are incentivized to produce reliable and accurate outputs.

Example: Reinforcement learning frameworks can be designed using principles from mechanism design to reward language models for generating high-quality text. By carefully structuring the reward mechanisms, developers can guide the models toward more reliable performance.
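A simple instance of an incentive-compatible reward is a proper scoring rule, under which an agent maximizes its expected reward by reporting its true confidence. The sketch below uses the negative Brier score as the reward. The function names and the assumed 0.7 probability of a correct answer are illustrative, and this is one possible reward design rather than a description of any particular reinforcement learning framework.

```python
def brier_reward(reported_confidence, was_correct):
    """Reward in [-1, 0]; higher is better. was_correct is True or False."""
    outcome = 1.0 if was_correct else 0.0
    return -(reported_confidence - outcome) ** 2

def expected_reward(reported_confidence, true_probability):
    """Expected reward when the answer is correct with probability true_probability."""
    return (true_probability * brier_reward(reported_confidence, True)
            + (1 - true_probability) * brier_reward(reported_confidence, False))

true_probability = 0.7  # hypothetical chance that the model's answer is right
candidates = [i / 100 for i in range(101)]
best_report = max(candidates, key=lambda q: expected_reward(q, true_probability))
print(best_report)  # 0.7
```

Overstating or understating confidence lowers the expected reward, so the rule gives the model no incentive to misreport. This is the kind of property mechanism design aims for when structuring rewards.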

Current Applications and Future Prospects

Current Applications

- Automated Content Moderation: Platforms such as social media sites and online forums can use game-theoretic approaches to develop models that more reliably detect and manage inappropriate content. Framing the interaction between content creators and moderators as a game lets these systems tune their strategies against users who adapt to evade detection.

- Collaborative AI Systems: In customer service, multiple AI agents often need to collaborate to provide coherent and accurate responses. Game theory helps in designing the interaction protocols and optimizing the collective behavior of these agents.

- Financial Forecasting: Language models used in financial analysis can benefit from game-theoretic techniques to predict market trends more reliably. By modeling the market as a game with various players (traders, institutions, etc.), these models can improve their predictive accuracy.

Future Prospects

Game theory holds significant promise for future AI development. As AI systems become more complex and more deeply integrated into society, the need for reliable and robust models will only grow, and game theory provides a powerful toolset for meeting that need.

- Enhanced Multi-Agent Systems: Future AI applications will increasingly involve multiple interacting agents. Game theory will play a crucial role in designing and optimizing these interactions to ensure system reliability and effectiveness.

- Advanced Adversarial Training Techniques: Developing more sophisticated adversarial training methods will help create language models that are resilient to manipulation and capable of maintaining high performance in dynamic environments.

- Integration with Reinforcement Learning: Combining game-theoretic principles with reinforcement learning will lead to more adaptive and robust AI systems. This synergy will enable language models to learn from their interactions in more complex and realistic scenarios.

- Ethical AI Design: Game theory can contribute to the ethical design of AI systems by ensuring that they adhere to fair and transparent decision-making processes. Mechanism design, in particular, can help create incentives for ethical behavior in AI.

Conclusion

Game theory offers a rich and versatile framework for improving the reliability of language models. By incorporating strategic decision-making, optimizing interactions, and designing robust mechanisms, AI developers can create more dependable and effective systems. As AI continues to advance, the integration of game-theoretic concepts will be crucial in addressing the challenges of complexity and reliability, paving the way for more sophisticated and trustworthy AI applications.

Through adversarial training, cooperative learning, and mechanism design, the potential for game theory to enhance AI is vast. Current applications already demonstrate its value, and future developments promise even greater advancements. By embracing these ideas, we can look forward to a future where language models are not only powerful but also consistently reliable and ethically sound.