Introduction:

Today’s discussion revolves around “human emulation,” which has become a hot topic because it reframes AI from content generation to capability replication: systems that can reliably do what humans do, digitally (knowledge work) and physically (manual work), with enough autonomy to run while people sleep.

In the Elon Musk ecosystem, this idea shows up in three converging bets:

- Autonomous digital workers (agentic AI that can operate tools, applications, and workflows end-to-end).

- Autonomous mobile assets (cars that can generate revenue when the owner isn’t using them).

- Autonomous physical workers (humanoids that can perform tasks in human-built environments).

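Tesla is clearly driving the second and third bets (autonomous mobile assets and autonomous physical workers). xAI is positioning itself as a serious contender for the first (autonomous digital workers) and likely as the “brain layer” that connects these domains.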

Tesla’s Human Emulation Stack: Car-as-Worker and Robot-as-Worker

1) “Earn while you sleep”: the autonomous vehicle as an income-producing asset

The most concrete “human emulation” narrative from Tesla is the claim that a Tesla could join a robotaxi network to generate revenue when idle, conceptually similar to Airbnb for cars. Tesla has publicly promoted the idea that a vehicle could “earn money while you’re not using it.”

On the operational side, Tesla has been running a limited robotaxi service (not yet the “no-supervision everywhere” end state). Reporting in 2025 noted Tesla’s robotaxi approach is expanding gradually and still uses safety monitoring in some form, underscoring that this is a staged rollout rather than a flip-the-switch moment.

Why this matters for “human emulation”:

A human rideshare driver monetizes time. A robotaxi monetizes asset uptime. If Tesla achieves high autonomy + acceptable insurance/regulatory frameworks + scalable operations (charging, cleaning, dispatch), then the “sleeping hours” of the owner become economically productive.
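The time-versus-uptime contrast can be made concrete with back-of-the-envelope arithmetic. The sketch below is illustrative only: the function name, its parameters, and every number in the usage note are placeholder assumptions, not Tesla figures.

```python
def net_idle_income(
    idle_hours: float,
    utilization: float,
    revenue_per_hour: float,
    variable_cost_per_hour: float,
    fixed_cost: float,
) -> float:
    """Net income from hours the owner is not using the car.

    utilization is the share of idle hours actually spent on paid trips.
    variable_cost_per_hour covers energy and wear while driving.
    fixed_cost covers per-period items such as cleaning and insurance.
    """
    paid_hours = idle_hours * utilization
    margin_per_hour = revenue_per_hour - variable_cost_per_hour
    return paid_hours * margin_per_hour - fixed_cost
```

With made-up inputs, `net_idle_income(10, 0.5, 20.0, 8.0, 15.0)` gives 45.0, while dropping utilization to 0.1 gives -3.0. The point of the sketch is the shape of the result: at low utilization, fixed operating costs can erase the income entirely.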

Practitioner lens: expect the first big enterprise opportunities not in consumer “passive income,” but in fleet economics (airports, hotels, logistics, managed mobility) where charging/cleaning/maintenance can be industrialized.

2) Optimus: emulating physical labor (not just movement)

Tesla’s own positioning for Optimus is explicit: a general-purpose bipedal humanoid intended for “unsafe, repetitive or boring tasks.”

Independent reporting continues to emphasize two realities at once:

- Tesla is serious about scaling Optimus and tying it to the autonomy stack.

- The industry is split on humanoid form factors; many experts argue task-specific robots outperform humanoids for most industrial work—at least for the foreseeable future.

Why this matters for “human emulation”:

The humanoid bet isn’t about novelty; it’s about compatibility with human environments (stairs, doors, tools, workstations) and the option value of “one robot, many tasks,” even if early deployments are narrow.

3) Compute is the flywheel: chips + training infrastructure

If you assume autonomy and robotics are compute-hungry, then Tesla’s investments in AI compute and custom silicon become part of the “human emulation” story. Recent reporting highlighted Tesla’s continued push toward in-house compute/AI hardware ambitions (e.g., Dojo-related efforts and new chip roadmaps).

Why this matters:

Human emulation at scale is less about one model and more about a factory of models: perception, planning, manipulation, dialogue, compliance, simulation, and continuous learning loops.

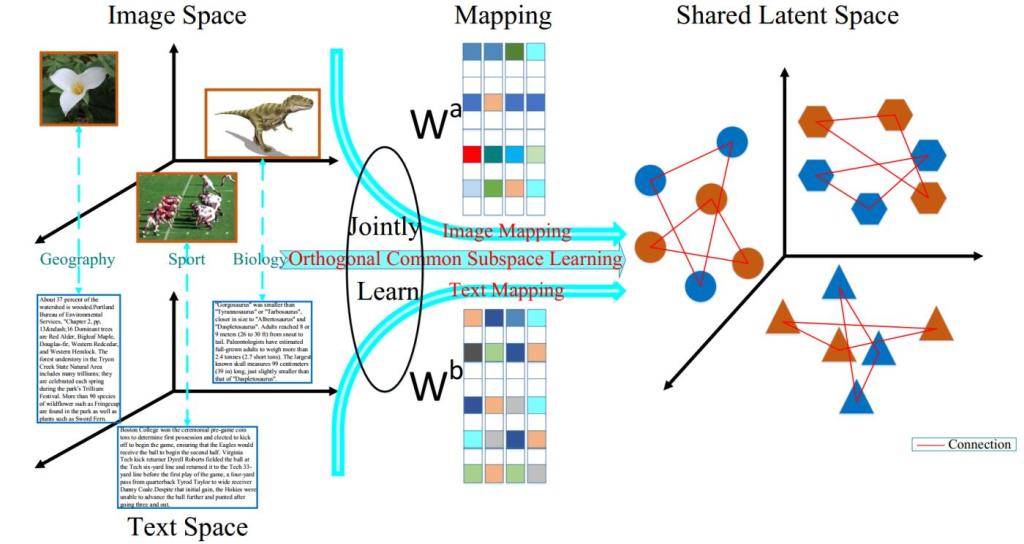

xAI’s Role: Digital Human Emulation (Agentic Work), Not Just Chat

1) Grok’s shift from “chatbot” to “agent”

xAI has been pushing into agentic capabilities, not just answering questions, but executing tasks via tools. In late 2025, xAI announced an Agent Tools API positioned explicitly to let Grok operate as an autonomous agent.

This matters because “digital human emulation” is often less about deep reasoning and more about:

- navigating enterprise systems,

- orchestrating multi-step workflows,

- using tools correctly,

- handling exceptions,

- producing auditable outcomes.

That is the core of how you replace “a person at a keyboard” with “a system at a keyboard.”

2) What xAI may be building beyond “let your Tesla do side jobs”

It is worth exploring what xAI might be doing beyond leveraging Teslas for secondary jobs. Here are the plausible directions, grounded in what xAI has publicly disclosed (agent tooling) and what the market is converging on (agents as workflow executors), while being clear about where we’re extrapolating.

A) “Digital workers” that emulate office roles (high-likelihood near/mid-term)

Given xAI’s tooling direction, the near-term “human emulation” play is enterprise-grade agents that can:

- execute customer operations tasks,

- do research + analysis with sources,

- create and update tickets, CRM objects, and knowledge articles,

- coordinate with human approvers.

This aligns with the general definition of AI agents as systems that autonomously perform tasks on behalf of users.

What would differentiate xAI here?

Potentially:

- tight integration with real-time public data streams (notably X, where available),

- multi-agent collaboration patterns (planner/executor/verifier),

- lower-latency tool use for operations workflows.
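The planner/executor/verifier pattern named in the list above can be sketched in a few lines of Python. This is a minimal illustration, not xAI's API: the names `Step`, `plan`, `execute`, `verify`, and `run`, the tool names, and the in-memory `crm` dictionary are all hypothetical stand-ins for model calls and real enterprise systems.

```python
from dataclasses import dataclass


@dataclass
class Step:
    tool: str   # hypothetical tool name
    args: dict  # arguments passed to that tool


def plan(goal: str) -> list[Step]:
    # Planner: a real system would ask a model; this plan is hard-coded.
    return [
        Step("lookup_order", {"order_id": "A1"}),
        Step("update_status", {"order_id": "A1", "status": "refunded"}),
    ]


def execute(step: Step, crm: dict) -> dict:
    # Executor: performs exactly one tool call against the fake system of record.
    if step.tool == "lookup_order":
        return {"found": step.args["order_id"] in crm}
    if step.tool == "update_status":
        crm[step.args["order_id"]] = step.args["status"]
        return {"updated": True}
    raise ValueError(f"unknown tool: {step.tool}")


def verify(step: Step, result: dict, crm: dict) -> bool:
    # Verifier: checks the observable outcome rather than trusting the executor.
    if step.tool == "lookup_order":
        return result.get("found") is True
    if step.tool == "update_status":
        return crm.get(step.args["order_id"]) == step.args["status"]
    return False


def run(goal: str, crm: dict) -> str:
    for step in plan(goal):
        result = execute(step, crm)
        if not verify(step, result, crm):
            return "escalated"  # stop and hand off to a human
    return "completed"
```

Calling `run("refund order A1", {"A1": "paid"})` completes both steps, while calling it with an empty dictionary stops at the first failed check and escalates before anything is written. Keeping the verifier separate from the executor is the design choice that matters: the check reads the system state itself.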

B) “Embodied digital humans” for customer-facing interactions (mid-term)

There’s a parallel trend toward digital humans and embodied agents: lifelike interfaces that feel more human in conversation.

If xAI pairs high-function agents with high-presence interfaces, you get customer experiences that look and feel like “talking to a person,” while being backed by robust tool execution.

For CX leaders, the key shift is: the interface becomes humanlike, but the value is in the agent’s ability to do things, not just talk.

C) A cross-company autonomy layer (long-term, speculative but coherent)

The most ambitious “Musk ecosystem” interpretation is an autonomy platform spanning:

- digital work (xAI agents),

- mobility work (Tesla robotaxi),

- physical work (Optimus).

That would create an internal advantage: shared training approaches, shared safety tooling, shared simulation, and (critically) shared distribution.

Nothing public proves a unified roadmap across all entities—so treat this as a strategic pattern rather than a confirmed plan. What is public is Tesla’s emphasis on autonomy/robotics scale and xAI’s emphasis on agentic execution.

Near-, Mid-, and Long-Term Vision (A Practitioner’s Map)

Near term (0–24 months): “Humans-in-the-loop at scale”

What you’ll likely see:

- Agentic systems that complete tasks but still require approvals for sensitive actions (refunds, cancellations, policy exceptions).

- Robotaxi expansion remains geographically constrained and operationally monitored in meaningful ways (safety, regulation, insurance).

- Early Optimus deployments remain limited, structured, and heavily operationalized.

Winning moves for practitioners:

- Build workflow-native agent deployments (CRM, ITSM, ERP), not “chat next to the workflow.”

- Invest in process instrumentation (event logs, exception taxonomies, policy rules) so agents can act safely.

- Define human-emulation KPIs: completion rate, exception rate, time-to-resolution, cost per outcome, audit pass rate.
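The KPIs in the last bullet are straightforward to compute once each agent task is logged as a record. The sketch below assumes a hypothetical `TaskRecord` shape and one reasonable definition per metric; in particular, "cost per outcome" is taken here to mean total spend divided by completed tasks, which is an assumption rather than a standard.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    completed: bool          # agent finished without a human taking over
    raised_exception: bool   # task hit a case outside the playbook
    minutes_to_resolve: float
    cost: float              # spend attributed to the task (compute, review time)
    audit_passed: bool


def kpis(records: list[TaskRecord]) -> dict[str, float]:
    n = len(records)
    if n == 0:
        raise ValueError("no records to evaluate")
    done = [r for r in records if r.completed]
    total_cost = sum(r.cost for r in records)
    return {
        "completion_rate": len(done) / n,
        "exception_rate": sum(r.raised_exception for r in records) / n,
        # Time-to-resolution is averaged over completed tasks only.
        "avg_minutes_to_resolve": (
            sum(r.minutes_to_resolve for r in done) / len(done)
            if done else float("nan")
        ),
        # Failed attempts still cost money, so they count in the numerator.
        "cost_per_outcome": total_cost / len(done) if done else float("inf"),
        "audit_pass_rate": sum(r.audit_passed for r in records) / n,
    }
```

Charging failed attempts to the cost of successful outcomes is deliberate: it keeps a high exception rate from hiding behind a low per-task cost.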

Mid term (2–5 years): “Autonomy becomes a platform, not a feature”

What you’ll likely see:

- Multi-agent operations (planner + doer + verifier) become standard.

- Digital labor begins to reshape operating models: fewer handoffs, more straight-through processing.

- In mobility, if Tesla’s robotaxi scales, ecosystems emerge for fleet ops (cleaning, charging, remote assist, insurance products, municipal partnerships).

Winning moves for practitioners:

- Treat agents as a new workforce category: onboarding, role design, permissions, QA, drift monitoring, and continuous improvement.

- Implement policy-as-code for agent actions (what it may do, with what evidence, with what approvals).

- Modernize your knowledge architecture: retrieval is necessary but insufficient—agents need transactional authority with guardrails.
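Policy-as-code, as described in the second bullet, means the three questions (what the agent may do, with what evidence, with what approvals) live in reviewable data instead of prompt text. The following is a minimal sketch under stated assumptions: the `Policy` fields, the action names, and the dollar limits are hypothetical, and a production system would likely use a dedicated policy engine.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Policy:
    required_evidence: frozenset[str]  # fields the agent must supply
    auto_limit: Optional[float]        # None means a human always approves
    hard_limit: float                  # above this the agent may not act at all


# Hypothetical rules; real values would come from the business owner.
POLICIES = {
    "refund": Policy(frozenset({"order_id", "reason"}), 50.0, 500.0),
    "cancellation": Policy(frozenset({"account_id"}), None, 0.0),
}


def decide(action: str, amount: float, evidence: dict) -> str:
    policy = POLICIES.get(action)
    if policy is None:
        return "deny"  # default-deny: unlisted actions are never allowed
    if not policy.required_evidence.issubset(evidence):
        return "deny"  # no action without the required evidence
    if amount > policy.hard_limit:
        return "deny"
    if policy.auto_limit is None or amount > policy.auto_limit:
        return "needs_approval"
    return "allow"
```

Under these example rules, a 20.0 refund with both evidence fields is allowed, a 120.0 refund is routed for approval, and a 900.0 refund or any unlisted action is denied. Because the rules are plain data, they can be versioned and audited like any other code change.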

Long term (5–10+ years): “Economic structure changes around machine labor”

What you’ll likely see:

- A meaningful portion of “routine knowledge work” becomes machine-executed.

- Physical automation (humanoids and non-humanoids) expands, but unevenly: task suitability and ROI will dominate.

- Regulatory and societal pressure increases around accountability, job transitions, and safety.

Winning moves for practitioners:

- Build trust infrastructure: audit trails, model-risk management, incident response, and transparent customer disclosures.

- Redesign experiences assuming “the worker is software” (24/7 service, instant fulfillment) while keeping human escalation excellent.

- Prepare for brand risk: “human emulation” failures are reputationally louder than ordinary software bugs.

Societal Impact: The Second-Order Effects Leaders Underestimate

- Labor shifts from time to orchestration

The scarce skill becomes not “doing tasks,” but designing systems that do tasks safely.

- The accountability gap becomes the battleground

When an agent acts, who is responsible: vendor, operator, enterprise, or user? This is where governance becomes a competitive advantage.

- New inequality vectors appear

If asset ownership (cars, robots, compute) drives income, then autonomy can amplify returns to capital faster than returns to labor.

- Customer expectations reset

Once autonomous systems deliver instant, 24/7 outcomes, customers will view “business hours” and “wait 3–5 days” as broken experiences.

What a Practitioner Should Be Aware Of (and How to Get in Front)

The big risks to plan for

- Operational reality risk: “autonomous” still requires edge-case handling, maintenance, and exception operations (digital and physical).

- Governance risk: without tight permissions and auditability, agents create compliance exposure.

- Model drift & policy drift: the system remains “correct” only if data, policies, and monitoring stay aligned.

Practical steps to get ahead (starting now)

- Pick 3 workflows where a digital human already exists

Meaning: a person follows a repeatable playbook across systems (refunds, order changes, ticket triage, appointment rescheduling).

- Decompose into “decision + action”

  - Decisions: classify, approve, prioritize.

  - Actions: update systems, send comms, execute transactions.

- Build an “agent runway”

  - Tool access model (least privilege)

  - Approval tiers (auto / sampled / always-human)

  - Evidence logging (why the agent did it)

  - Continuous evaluation (golden sets + live monitoring)

- Create an autonomy roadmap with three lanes

  - Assistive (draft, suggest, summarize)

  - Transactional (execute with guardrails)

  - Autonomous (execute + self-correct + escalate)

- For mobility/robotics: partner early, but operationalize hard

If you’re exploring “vehicle-as-worker” economics, treat it like launching a micro-logistics business: charging, cleaning, incident response, insurance, and municipal constraints will dominate outcomes before the AI does.
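Several of the steps above (the decision/action split, approval tiers, and evidence logging) fit together in one small routine. This sketch is a hedged illustration: the tier table, the 1-in-10 sampling rate, the keyword classifier, and all function names are hypothetical choices made for the example.

```python
import hashlib
import json

# Hypothetical tier assignment per action type.
TIERS = {"ticket_triage": "auto", "order_change": "sampled", "refund": "always_human"}
SAMPLE_EVERY = 10  # send roughly 1 in 10 "sampled" tasks to a reviewer


def classify(ticket: dict) -> str:
    # Decision step: classification only, with no side effects.
    return "refund" if "refund" in ticket["text"].lower() else "ticket_triage"


def needs_human(action: str, task_id: str) -> bool:
    tier = TIERS.get(action, "always_human")  # unknown actions get the strictest tier
    if tier == "auto":
        return False
    if tier == "always_human":
        return True
    # Deterministic sampling: hashing the task id makes the choice reproducible.
    digest = hashlib.sha256(task_id.encode()).hexdigest()
    return int(digest, 16) % SAMPLE_EVERY == 0


def handle(ticket: dict, log: list) -> str:
    action = classify(ticket)
    # Action step: a real system would call a tool here instead of returning a label.
    outcome = "queued_for_human" if needs_human(action, ticket["id"]) else "executed"
    # Evidence log: record what was decided and the input that justified it.
    log.append(json.dumps(
        {"task": ticket["id"], "action": action,
         "outcome": outcome, "evidence": ticket["text"]},
        sort_keys=True,
    ))
    return outcome
```

Hash-based sampling is used instead of a random draw so that an auditor can later reproduce exactly which tasks should have been reviewed.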

Bottom Line

Tesla is pursuing human emulation in the physical world (Optimus) and human-emulation economics in mobility (robotaxi-as-income).

xAI is laying groundwork for human emulation in digital work via agentic tooling that can execute tasks, not just respond.

If you want to get in front of this, don’t start with “Which model?” Start with: Which outcomes will you allow a machine to own end-to-end, under what controls, with what proof?

Please join us on Spotify as we discuss this and other topics in the AI space.