Introduction: What Is Agentic AI?

Agentic AI refers to a class of artificial intelligence systems designed to act autonomously toward achieving specific goals with minimal human intervention. Unlike traditional AI systems that react based on fixed rules or narrow task-specific capabilities, Agentic AI exhibits intentionality, adaptability, and planning behavior. These systems are increasingly capable of perceiving their environment, making decisions in real time, and executing sequences of actions over extended periods—often while learning from the outcomes to improve future performance.

At its core, Agentic AI transforms AI from a passive, tool-based role to an active, goal-oriented agent—capable of dynamically navigating real-world constraints to accomplish objectives. It mirrors how human agents operate: setting goals, evaluating options, adapting strategies, and pursuing long-term outcomes.

Historical Context and Evolution

The idea of agent-like machines dates back to early AI research in the 1950s and 1960s with concepts like symbolic reasoning, utility-based agents, and deliberative planning systems. However, these early systems lacked robustness and adaptability in dynamic, real-world environments.

Significant milestones in Agentic AI progression include:

- 1980s–1990s: Emergence of multi-agent systems and BDI (Belief-Desire-Intention) architectures.

- 2000s: Growth of autonomous robotics and decision-theoretic planning (e.g., Mars rovers).

- 2010s: Deep reinforcement learning (e.g., DeepMind’s DQN and AlphaGo) produced agents that learn strategies from experience and self-play.

- 2020s–Today: Foundation models (e.g., GPT-4, Claude, Gemini) gain capabilities in multi-turn reasoning, planning, and self-reflection—paving the way for Agentic LLM-based systems like Auto-GPT, BabyAGI, and Devin (Cognition AI).

Today, we’re witnessing a shift toward composite agents—Agentic AI systems that combine perception, memory, planning, and tool-use, forming the building blocks of synthetic knowledge workers and autonomous business operations.

Core Technologies Behind Agentic AI

Agentic AI is enabled by the convergence of several key technologies:

1. Foundation Models: The Cognitive Core of Agentic AI

Foundation models are the essential engines powering the reasoning, language understanding, and decision-making capabilities of Agentic AI systems. These models—trained on massive corpora of text, code, and increasingly multimodal data—are designed to generalize across a wide range of tasks without the need for task-specific fine-tuning.

They don’t just perform classification or pattern recognition—they reason, infer, plan, and generate. This shift makes them uniquely suited to serve as the cognitive backbone of agentic architectures.

What Defines a Foundation Model?

A foundation model is typically:

- Large-scale: Billions to hundreds of billions of parameters, trained on up to trillions of tokens.

- Pretrained: Uses unsupervised or self-supervised learning on diverse internet-scale datasets.

- General-purpose: Adaptable across domains (finance, healthcare, legal, customer service).

- Multi-task: Can perform summarization, translation, reasoning, coding, classification, and Q&A without explicit retraining.

- Multimodal (increasingly): Supports text, image, audio, and video inputs (e.g., GPT-4o, Gemini 1.5, Claude 3 Opus).

This versatility is why foundation models are being abstracted as AI operating systems—flexible intelligence layers ready to be orchestrated in workflows, embedded in products, or deployed as autonomous agents.

Leading Foundation Models Powering Agentic AI

| Model | Developer | Strengths for Agentic AI |
|---|---|---|
| GPT-4 / GPT-4o | OpenAI | Strong reasoning, tool use, function calling, long context |
| Claude 3 Opus | Anthropic | Constitutional AI, safe decision-making, long context (200K tokens) |
| Gemini 1.5 Pro | Google DeepMind | Native multimodal input, real-time tool orchestration |
| Mixtral | Mistral AI | Lightweight, open weights, composability |
| LLaMA 3 | Meta AI | Private deployment, edge AI, open fine-tuning |
| Command R+ | Cohere | Optimized for RAG + retrieval-heavy enterprise tasks |

These models serve as reasoning agents—when embedded into a larger agentic stack, they enable perception (input understanding), cognition (goal setting and reasoning), and execution (action selection via tool use).

Foundation Models in Agentic Architectures

Agentic AI systems typically wrap a foundation model inside a reasoning loop, such as:

- ReAct (Reason + Act + Observe)

- Plan-Execute (used in Auto-GPT/CrewAI)

- Tree of Thought / Graph of Thought (branching logic exploration)

- Chain of Thought Prompting (decomposing complex problems step-by-step)

In these loops, the foundation model:

- Processes high-context inputs (task, memory, user history).

- Decomposes goals into sub-tasks or plans.

- Selects and calls tools or APIs to gather information or act.

- Reflects on results and adapts next steps iteratively.

This makes the model not just a chatbot, but a cognitive planner and execution coordinator.
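The loop described above can be sketched in a few lines. This is a minimal illustration of the ReAct pattern, not any framework's implementation: `scripted_model` is a hypothetical stand-in for a real foundation model call, and `lookup_inventory` is an invented tool with made-up stock data.

```python
from typing import Callable, Dict, List, Tuple


def lookup_inventory(item: str) -> str:
    """Hypothetical tool; a real agent would query a database or API here."""
    stock = {"widget": 12, "gadget": 0}
    return f"{item}: {stock.get(item, 0)} in stock"


TOOLS: Dict[str, Callable[[str], str]] = {"lookup_inventory": lookup_inventory}


def scripted_model(history: List[str]) -> Tuple[str, str, str]:
    """Stand-in for a foundation model: returns (thought, action, argument)."""
    if not history[-1].startswith("Observation: "):
        return ("I need stock data first.", "lookup_inventory", "widget")
    answer = history[-1].split(": ", 1)[1]
    return ("I have what I need.", "finish", answer)


def react_loop(task: str, model=scripted_model, max_steps: int = 5) -> str:
    """Reason, act, observe, and repeat until the model finishes or steps run out."""
    history = [f"Task: {task}"]
    for _ in range(max_steps):
        thought, action, arg = model(history)
        history.append(f"Thought: {thought}")
        if action == "finish":
            return arg
        tool = TOOLS.get(action)
        observation = tool(arg) if tool else f"unknown tool {action!r}"
        history.append(f"Action: {action}({arg})")
        history.append(f"Observation: {observation}")
    return "stopped: step limit reached"


print(react_loop("How many widgets do we have?"))
```

The `max_steps` cap is a design choice worth keeping in any real loop: it bounds cost and prevents an agent from cycling indefinitely when the model never decides to finish.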

What Makes Foundation Models Enterprise-Ready?

For organizations evaluating Agentic AI deployments, the maturity of the foundation model is critical. Key capabilities include:

- Function Calling APIs: Securely invoke tools or backend systems (e.g., OpenAI’s function calling or Anthropic’s tool use interface).

- Extended Context Windows: Retain memory over long prompts and documents (up to 1M+ tokens in Gemini 1.5).

- Fine-Tuning and RAG Compatibility: Adapt behavior or ground answers in private knowledge.

- Safety and Governance Layers: Constitutional AI (Claude), moderation APIs (OpenAI), and configurable safety filters (Google) help ensure reliability.

- Customizability: Open-source models allow enterprise-specific tuning and on-premise deployment.

Strategic Value for Businesses

Foundation models are the platforms on which Agentic AI capabilities are built. Their availability through API (SaaS), private LLMs, or hybrid edge-cloud deployment allows businesses to:

- Rapidly build autonomous knowledge workers.

- Inject AI into existing SaaS platforms via co-pilots or plug-ins.

- Construct AI-native processes where the reasoning layer lives between the user and the workflow.

- Orchestrate multi-agent systems using one or more foundation models as specialized roles (e.g., analyst agent, QA agent, decision validator).

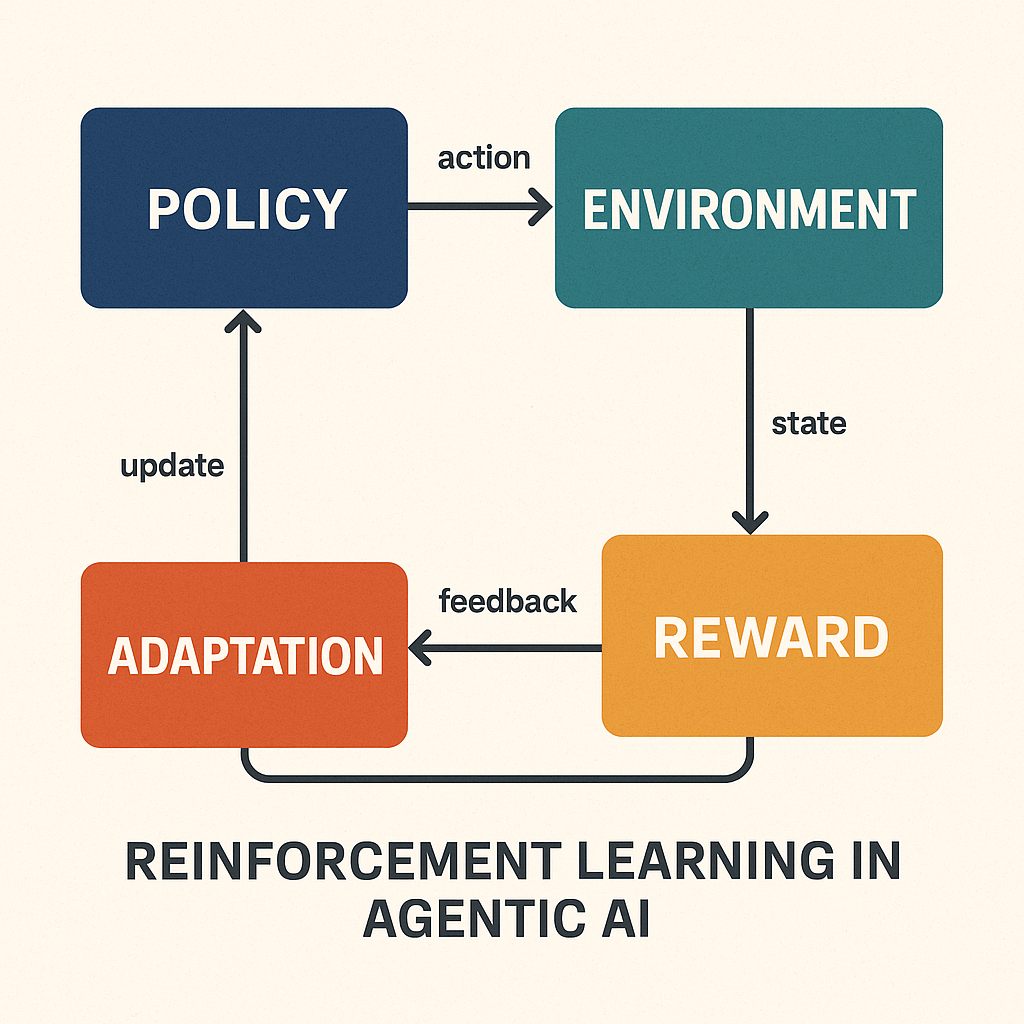

2. Reinforcement Learning: Enabling Goal-Directed Behavior in Agentic AI

Reinforcement Learning (RL) is a core component of Agentic AI, enabling systems to make sequential decisions based on outcomes, adapt over time, and learn strategies that maximize cumulative rewards—not just single-step accuracy.

In supervised learning, models are trained on labeled data. In RL, agents learn through interaction, by trial and error, receiving rewards or penalties based on the consequences of their actions within an environment. This makes RL particularly suited for dynamic, multi-step tasks where success isn’t immediately obvious.

Why RL Matters in Agentic AI

Agentic AI systems aren’t just responding to static queries—they are:

- Planning long-term sequences of actions

- Making context-aware trade-offs

- Optimizing for outcomes (not just responses)

- Adapting strategies based on experience

Reinforcement learning provides the feedback loop necessary for this kind of autonomy. It’s what allows Agentic AI to exhibit behavior resembling initiative, foresight, and real-time decision optimization.

Core Concepts in RL and Deep RL

| Concept | Description |
|---|---|
| Agent | The decision-maker (e.g., an AI assistant or robotic arm) |
| Environment | The system it interacts with (e.g., CRM system, warehouse, user interface) |
| Action | A choice or move made by the agent (e.g., send an email, move a robotic arm) |
| Reward | Feedback signal (e.g., successful booking, faster resolution, customer rating) |
| Policy | The strategy the agent learns to map states to actions |
| State | The current situation of the agent in the environment |
| Value Function | Expected cumulative reward from a given state or state-action pair |

Deep Reinforcement Learning (DRL) incorporates neural networks to approximate value functions and policies, allowing agents to learn in high-dimensional and continuous environments (like language, vision, or complex digital workflows).
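To make the concepts in the table concrete, here is a minimal tabular Q-learning sketch. The environment is an invented five-state corridor (not a business system), and the hyperparameters are illustrative assumptions. The table `q` plays the role of the value function, and the greedy choice over it is the learned policy; deep RL replaces the table with a neural network.

```python
import random

N_STATES, GOAL = 5, 4   # states 0..4 on a line; reaching state 4 ends the episode
ACTIONS = (0, 1)        # 0 = step left, 1 = step right


def step(state, action):
    """Toy deterministic environment: returns (next_state, reward, done)."""
    nxt = max(0, state - 1) if action == 0 else state + 1
    done = nxt == GOAL
    return nxt, (1.0 if done else 0.0), done


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, max_steps=200, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]   # q[state][action]
    for _ in range(episodes):
        state = 0
        for _ in range(max_steps):
            if rng.random() < epsilon:           # explore
                action = rng.choice(ACTIONS)
            else:                                # exploit, breaking ties randomly
                best = max(q[state])
                action = rng.choice([a for a in ACTIONS if q[state][a] == best])
            nxt, reward, done = step(state, action)
            # Q-learning update: move toward reward + discounted best next value.
            target = reward + (0.0 if done else gamma * max(q[nxt]))
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
            if done:
                break
    return q


q_table = train()
policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(GOAL)]
print(policy)
```

The agent is never told that moving right is correct; it infers this from the reward at the goal, which propagates backward through the value estimates.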

Popular Algorithms and Architectures

| Type | Examples | Used For |
|---|---|---|
| Model-Free RL | Q-learning, PPO, DQN | No internal model of environment; trial-and-error focus |
| Model-Based RL | MuZero, Dreamer | Learns a predictive model of the environment |
| Multi-Agent RL | MADDPG, QMIX | Coordinated agents in distributed environments |
| Hierarchical RL | Options Framework, FeUdal Networks | High-level task planning over low-level controllers |
| RLHF (Human Feedback) | Reward-model fine-tuning, as used for GPT-4 and Claude | Aligning agents with human values and preferences |

Real-World Enterprise Applications of RL in Agentic AI

| Use Case | RL Contribution |
|---|---|
| Autonomous Customer Support Agent | Learns which actions (FAQs, transfers, escalations) optimize resolution & NPS |
| AI Supply Chain Coordinator | Continuously adapts order timing and vendor choice to optimize delivery speed |
| Sales Engagement Agent | Tests and learns optimal outreach timing, channel, and script per persona |
| AI Process Orchestrator | Improves process efficiency through dynamic tool selection and task routing |
| DevOps Remediation Agent | Learns to reduce incident impact and time-to-recovery through adaptive actions |

RL + Foundation Models = Emergent Agentic Capabilities

Traditionally, RL was applied to control problems such as games and robotics. Its integration with large language models is now powering a new class of cognitive agents:

- OpenAI’s InstructGPT / ChatGPT leveraged RLHF to fine-tune dialogue behavior.

- Devin (by Cognition AI) may use internal RL loops to optimize task completion over time.

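- Autonomous agents such as SWE-agent (software engineering) and Voyager (Minecraft exploration) improve within a task by iterating on environment feedback, such as test results and execution errors, rather than through RL weight updates; the loop is RL-like, but the underlying model stays fixed.

These agents don’t just reason: with RL or a comparable feedback loop in place, they can learn from success and failure and improve over successive deployments.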

Enterprise Considerations and Strategy

When designing Agentic AI systems with RL, organizations must consider:

- Reward Engineering: Defining the right reward signals aligned with business outcomes (e.g., customer retention, reduced latency).

- Exploration vs. Exploitation: Balancing new strategies vs. leveraging known successful behaviors.

- Safety and Alignment: RL agents can “game the system” if rewards aren’t properly defined or constrained.

- Training Infrastructure: Deep RL requires simulation environments or synthetic feedback loops—often a heavy compute lift.

- Simulation Environments: Agents must train in either real-world sandboxes or virtualized process models.
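The reward engineering and "gaming" risks above can be illustrated with a small sketch. The `TicketOutcome` fields, the penalty values, and the 30-minute target are all hypothetical assumptions chosen for illustration; a real deployment would derive them from its own business metrics.

```python
from dataclasses import dataclass


@dataclass
class TicketOutcome:
    closed: bool
    resolved: bool            # assumed to mean "customer confirmed the fix"
    minutes_to_close: float
    reopened: bool = False


def naive_reward(outcome: TicketOutcome) -> float:
    """Rewards closure only, so an agent can score by closing tickets unresolved."""
    return 1.0 if outcome.closed else 0.0


def shaped_reward(outcome: TicketOutcome, target_minutes: float = 30.0) -> float:
    """Ties reward to the business outcome and penalizes the shortcut."""
    if outcome.reopened or (outcome.closed and not outcome.resolved):
        return -1.0
    if not outcome.closed:
        return 0.0
    speed_bonus = 0.5 * max(0.0, 1.0 - outcome.minutes_to_close / target_minutes)
    return 1.0 + speed_bonus


gamed = TicketOutcome(closed=True, resolved=False, minutes_to_close=1.0)
genuine = TicketOutcome(closed=True, resolved=True, minutes_to_close=15.0)
print(naive_reward(gamed), shaped_reward(gamed))      # shortcut pays under the naive signal only
print(naive_reward(genuine), shaped_reward(genuine))
```

Under the naive signal, closing a ticket in one minute without resolving it earns full reward, which is exactly the behavior an optimizer will find. The shaped version makes that shortcut costly.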

3. Planning and Goal-Oriented Architectures

Frameworks and prompting patterns such as:

- LangChain Agents

- Auto-GPT / OpenAgents

- ReAct (Reasoning + Acting)

are used to manage task decomposition, memory, and iterative refinement of actions.
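As a rough illustration of task decomposition, the sketch below represents a plan as subtasks with dependencies and executes them in a valid order using Python's standard `graphlib` module. The subtask names, handlers, and sales figures are invented for the example; in a real agent the plan would come from the model and the handlers would call actual tools.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each subtask maps to the set of subtasks it depends on.
plan = {
    "fetch_sales": set(),
    "summarize": {"fetch_sales"},
    "email_report": {"summarize"},
}


def fetch_sales(results):
    return [120, 95, 143]                      # made-up monthly figures


def summarize(results):
    return f"total={sum(results['fetch_sales'])}"


def email_report(results):
    return f"sent: {results['summarize']}"


handlers = {
    "fetch_sales": fetch_sales,
    "summarize": summarize,
    "email_report": email_report,
}


def execute(plan, handlers):
    """Run each subtask after its dependencies, passing earlier results forward."""
    results = {}
    for task in TopologicalSorter(plan).static_order():
        results[task] = handlers[task](results)
    return results


results = execute(plan, handlers)
print(results["email_report"])
```

The `results` dictionary acts as the plan's working memory: each step can read what earlier steps produced, which is what allows iterative refinement when a step is re-run.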

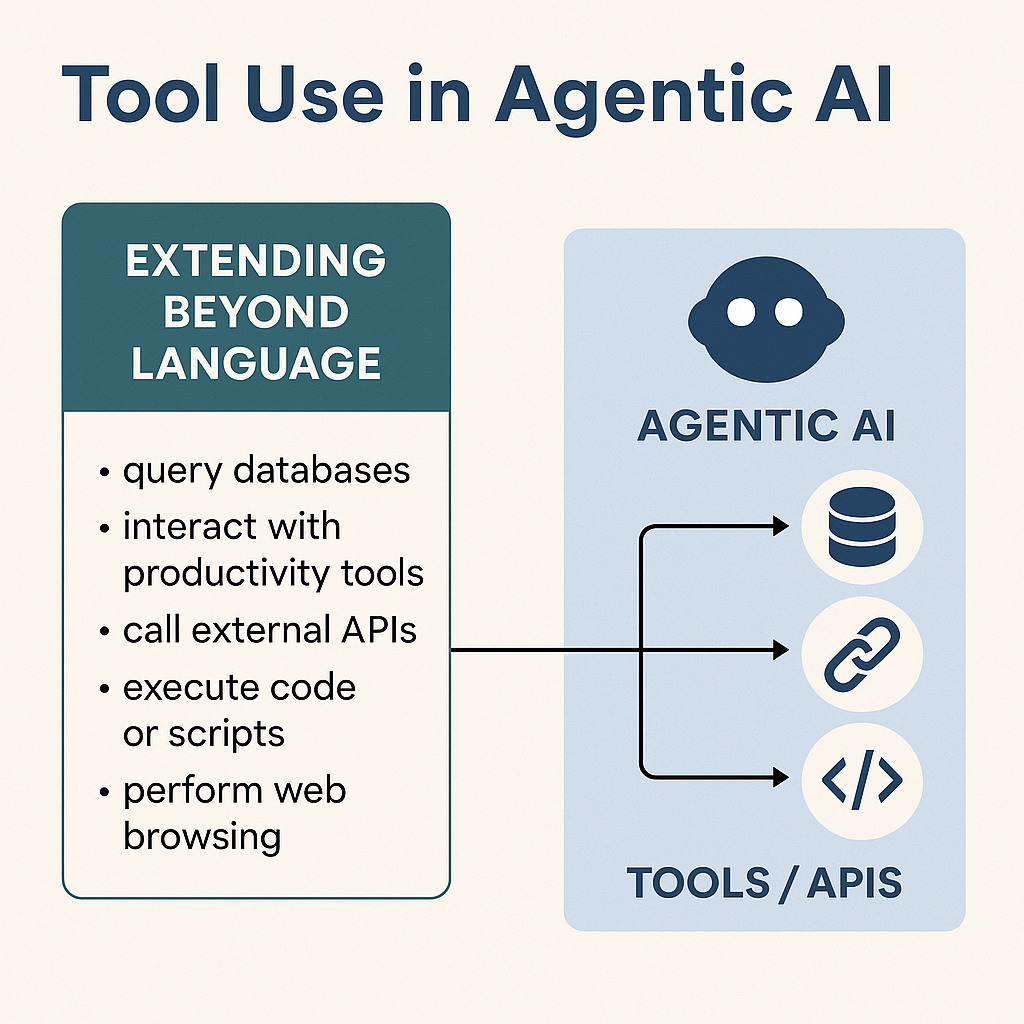

4. Tool Use and APIs: Extending the Agent’s Reach Beyond Language

One of the defining capabilities of Agentic AI is tool use—the ability to call external APIs, invoke plugins, and interact with software environments to accomplish real-world tasks. This marks the transition from “reasoning-only” models (like chatbots) to active agents that can both think and act.

What Do We Mean by Tool Use?

In practice, this means the AI agent can:

- Query databases for real-time data (e.g., sales figures, inventory levels).

- Interact with productivity tools (e.g., generate documents in Google Docs, create tickets in Jira).

- Call external APIs (e.g., weather forecasts, flight booking services, CRM platforms).

- Execute code or scripts (e.g., SQL queries, Python scripts for data analysis).

- Perform web browsing and scraping (when sandboxed or allowed) for competitive intelligence or customer research.

This ability unlocks a vast universe of tasks that require integration across business systems—a necessity in real-world operations.

How Is It Implemented?

Tool use in Agentic AI is typically enabled through the following mechanisms:

- Function Calling in LLMs: Models like OpenAI’s GPT-4o or Claude 3 can request predefined functions by name, with arguments structured against a declared schema. The application, not the model, executes the call, so it can validate arguments and enforce permissions; the model’s choice of function is still probabilistic and should be checked.

- LangChain & Semantic Kernel Agents: These frameworks allow developers to define “tools” as reusable, typed functions (Python or JavaScript in LangChain; C#, Python, or Java in Semantic Kernel), which are exposed to the agent as callable resources. The agent reasons over which tool to use at each step.

- OpenAI Plugins / ChatGPT Actions: Predefined tool APIs that extend the model’s environment (e.g., browsing, code interpreter, third-party services like Slack or Notion). OpenAI retired ChatGPT plugins in 2024 in favor of GPT Actions.

- Custom Toolchains: Enterprises can design private toolchains using REST APIs, gRPC endpoints, or even RPA bots. These are registered into the agent’s action space and governed by policies.

- Tool Selection Logic: Often governed by ReAct (Reasoning + Acting) or Plan-Execute architecture, where the agent:

  - Plans the next subtask.

  - Selects the appropriate tool.

  - Executes and observes the result.

  - Iterates or escalates as needed.
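The mechanisms above share one pattern: the model proposes a call, and application code validates and executes it. The sketch below shows that pattern with the standard library only. It does not use any vendor's SDK; the `{"name": ..., "arguments": ...}` payload shape, the `tool` decorator, and `create_ticket` are assumptions made for illustration.

```python
import inspect
import json

TOOLS = {}


def tool(fn):
    """Register a typed function in the agent's action space."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def create_ticket(title: str, priority: int) -> dict:
    """Hypothetical tool; a real one would call a ticketing API."""
    return {"id": 1, "title": title, "priority": priority}


def dispatch(call_json: str) -> dict:
    """Validate a model-proposed call, then run it. Never trusts the payload."""
    try:
        call = json.loads(call_json)
    except json.JSONDecodeError:
        return {"ok": False, "error": "invalid JSON"}
    fn = TOOLS.get(call.get("name"))
    if fn is None:
        return {"ok": False, "error": "unknown tool"}
    args = call.get("arguments", {})
    params = inspect.signature(fn).parameters
    extra = set(args) - set(params)
    if extra:
        return {"ok": False, "error": f"unexpected arguments: {sorted(extra)}"}
    for name, param in params.items():
        if name not in args:
            return {"ok": False, "error": f"missing argument: {name}"}
        if not isinstance(args[name], param.annotation):
            return {"ok": False, "error": f"wrong type for {name}"}
    return {"ok": True, "result": fn(**args)}


good = dispatch('{"name": "create_ticket", "arguments": {"title": "VPN down", "priority": 2}}')
bad = dispatch('{"name": "create_ticket", "arguments": {"title": "VPN down", "priority": "high"}}')
print(good, bad)
```

Returning a structured error instead of raising lets the agent observe the failure and retry with corrected arguments, which fits the "iterates or escalates" step.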

Examples of Agentic Tool Use in Practice

| Business Function | Agentic Tooling Example |
|---|---|
| Finance | AI agent generates financial summaries by calling ERP APIs (SAP/Oracle) |
| Sales | AI updates CRM entries in HubSpot, triggers lead follow-ups via email |
| HR | Agent schedules interviews via Google Calendar API + Zoom SDK |
| Product Development | Agent creates GitHub issues, links PRs, and comments in dev team Slack |
| Procurement | Agent scans vendor quotes, scores RFPs, and pushes results into Tableau |

Why It Matters

Tool use is the engine behind operational value. Without it, agents are limited to sandboxed environments—answering questions but never executing actions. Once equipped with APIs and tool orchestration, Agentic AI becomes an actor, capable of driving workflows end-to-end.

In a business context, this creates compound automation—where AI agents chain multiple systems together to execute entire business processes (e.g., “Generate monthly sales dashboard → Email to VPs → Create follow-up action items”).

This also sets the foundation for multi-agent collaboration, where different agents specialize (e.g., Finance Agent, Data Agent, Ops Agent) but communicate through APIs to coordinate complex initiatives autonomously.

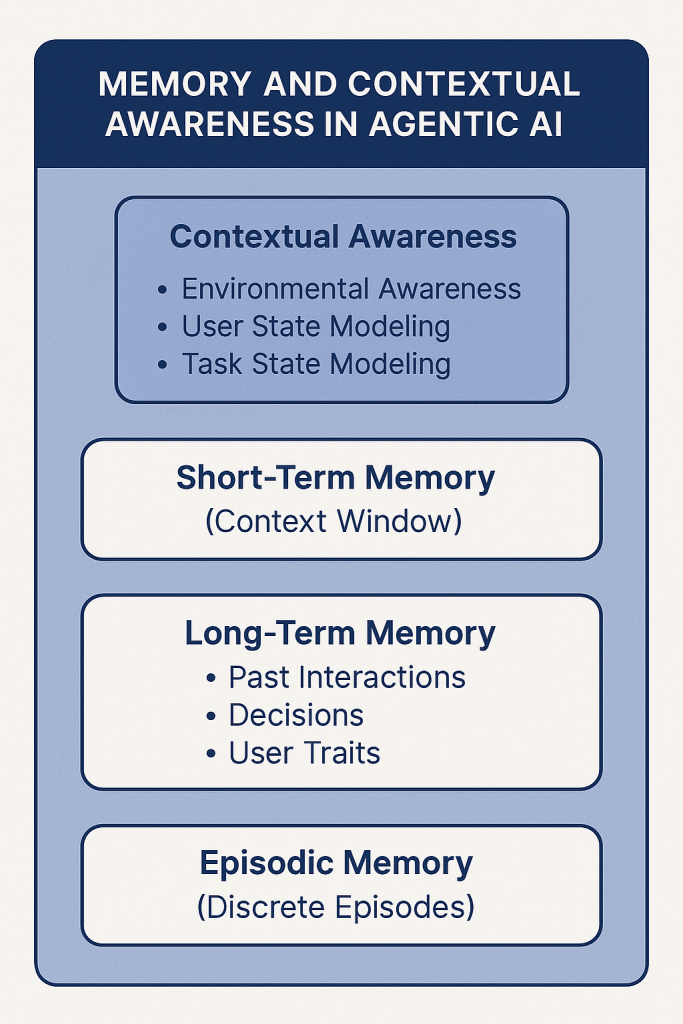

5. Memory and Contextual Awareness: Building Continuity in Agentic Intelligence

One of the most transformative capabilities of Agentic AI is memory—the ability to retain, recall, and use past interactions, observations, or decisions across time. Unlike stateless models that treat each prompt in isolation, Agentic systems leverage memory and context to operate over extended time horizons, adapt strategies based on historical insight, and personalize their behaviors for users or tasks.

Why Memory Matters

Memory transforms an agent from a task executor to a strategic operator. With memory, an agent can:

- Track multi-turn conversations or workflows over hours, days, or weeks.

- Retain facts about users, preferences, and previous interactions.

- Learn from success/failure to improve performance autonomously.

- Handle task interruptions and resumptions without starting over.

This is foundational for any Agentic AI system supporting:

- Personalized knowledge work (e.g., AI analysts, advisors)

- Collaborative teamwork (e.g., PM or customer-facing agents)

- Long-running autonomous processes (e.g., contract lifecycle management, ongoing monitoring)

Types of Memory in Agentic AI Systems

Agentic AI generally uses a layered memory architecture that includes:

1. Short-Term Memory (Context Window)

This refers to the model’s context window: the amount of text it can attend to in a single request. This is 128K tokens for GPT-4o and 200K tokens for Claude 3. It allows the agent to reason over detailed sequences (e.g., a 100-page report) in a single pass.

- Strength: Real-time recall within a conversation.

- Limitation: Forgetful across sessions without persistence.
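Because the context window is finite, agents commonly trim conversation history to fit a token budget. The sketch below keeps the most recent messages that fit. Counting whitespace-separated words is a crude assumption standing in for a real tokenizer, and the function name is hypothetical.

```python
def trim_to_budget(messages, max_tokens, count_tokens=lambda text: len(text.split())):
    """Keep the newest messages whose combined size fits within max_tokens."""
    kept, used = [], 0
    for message in reversed(messages):       # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break                            # older messages are dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))              # restore chronological order


history = ["a b c", "d e", "f g h i"]
print(trim_to_budget(history, max_tokens=6))
```

Anything trimmed this way is lost unless it was also written to persistent storage.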

2. Long-Term Memory (Persistent Storage)

Stores structured information about past interactions, decisions, user traits, and task states across sessions. This memory is typically retrieved dynamically when needed.

- Implemented via:

  - Vector databases (e.g., Pinecone, Weaviate, FAISS) to store semantic embeddings.

  - Knowledge graphs or structured logs for relationship mapping.

  - Event logging systems (e.g., Redis, S3-based memory stores).

- Use Case Examples:

  - Remembering project milestones and decisions made over a 6-week sprint.

  - Retaining user-specific CRM insights across customer service interactions.

  - Building a working knowledge base from daily interactions and tool outputs.
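The retrieval step behind a vector database can be sketched without any external service. Here a bag-of-words counter stands in for a learned embedding model, and an in-memory list stands in for the vector store; both are simplifying assumptions, and the stored notes are invented. The ranking by cosine similarity is the part that carries over to real systems.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class MemoryStore:
    def __init__(self):
        self._items = []                      # list of (text, vector) pairs

    def add(self, text):
        self._items.append((text, embed(text)))

    def search(self, query, k=1):
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


store = MemoryStore()
store.add("customer prefers email contact")
store.add("sprint 4 decision: postpone billing migration")
store.add("vendor Acme quoted 12 percent discount")
print(store.search("what did we decide about the billing migration"))
```

Word counts only match exact terms, so this toy version misses paraphrases that a learned embedding would catch.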

3. Episodic Memory

Captures discrete sessions or task executions as “episodes” that can be recalled as needed. For example, “What happened the last time I ran this analysis?” or “Summarize the last three weekly standups.”

- Often linked to LLMs using metadata tags and timestamped retrieval.
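A minimal sketch of tag-and-timestamp retrieval follows. The `Episode` and `EpisodicLog` names, the tags, and the dates are hypothetical; a production system would persist episodes rather than hold them in a list.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import FrozenSet, List


@dataclass(frozen=True)
class Episode:
    started: datetime
    tags: FrozenSet[str]
    summary: str


class EpisodicLog:
    def __init__(self) -> None:
        self._episodes: List[Episode] = []

    def record(self, started: datetime, tags, summary: str) -> None:
        self._episodes.append(Episode(started, frozenset(tags), summary))

    def recent(self, tag: str, n: int) -> List[str]:
        """Summaries of the n most recent episodes carrying `tag`, newest first."""
        matches = [e for e in self._episodes if tag in e.tags]
        matches.sort(key=lambda e: e.started, reverse=True)
        return [e.summary for e in matches[:n]]


log = EpisodicLog()
log.record(datetime(2024, 5, 6), {"standup"}, "Week 1: kickoff")
log.record(datetime(2024, 5, 13), {"standup"}, "Week 2: API blocked")
log.record(datetime(2024, 5, 15), {"analysis"}, "Churn analysis run")
log.record(datetime(2024, 5, 20), {"standup"}, "Week 3: API unblocked")
print(log.recent("standup", 2))
```

The retrieved summaries would then be placed in the model's prompt, which is how a request like "summarize the last three weekly standups" gets answered.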

Contextual Awareness Beyond Memory

Memory enables continuity, but contextual awareness makes the agent situationally intelligent. This includes:

- Environmental Awareness: Real-time input from sensors, applications, or logs. E.g., current stock prices, team availability in Slack, CRM changes.

- User State Modeling: Knowing who the user is, what role they’re playing, their intent, and preferred interaction style.

- Task State Modeling: Understanding where the agent is within a multi-step goal, what has been completed, and what remains.
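Task state modeling is what lets an agent pause and resume. The sketch below tracks completed steps, serializes the state to JSON, and resumes without repeating work. The step names and the `stop_after` parameter (used here only to simulate an interruption) are assumptions for illustration.

```python
import json


def run_steps(steps, state, stop_after=None):
    """Run pending steps in order, skipping any already recorded as completed."""
    for name in steps:
        if name in state["completed"]:
            continue
        state["completed"].append(name)      # a real agent would do the work here
        if name == stop_after:
            break                            # simulate an interruption
    return state


def remaining(steps, state):
    return [name for name in steps if name not in state["completed"]]


steps = ["collect_quotes", "score_vendors", "draft_po"]
state = run_steps(steps, {"goal": "procurement", "completed": []}, stop_after="score_vendors")
saved = json.dumps(state)                    # persist to any durable store
restored = json.loads(saved)
pending = remaining(steps, restored)
resumed = run_steps(steps, restored)
print(pending, resumed["completed"])
```

Keeping the state as plain JSON means it can be stored in any of the persistence layers listed earlier and inspected by a human when something goes wrong.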

Together, memory and context awareness create the conditions for agents to behave with intentionality and responsiveness, much like human assistants or operators.

Key Technologies Enabling Memory in Agentic AI

| Capability | Enabling Technology |
|---|---|
| Semantic Recall | Embeddings + Vector DBs (e.g., OpenAI + Pinecone) |
| Structured Memory Stores | Redis, PostgreSQL, JSON-encoded long-term logs |
| Retrieval-Augmented Generation (RAG) | Hybrid search + generation for factual grounding |
| Event and Interaction Logs | Custom metadata logging + time-series session data |
| Memory Orchestration | LangChain Memory, Semantic Kernel Memory, AutoGen, CrewAI |

Enterprise Implications

For clients exploring Agentic AI, the ability to retain knowledge over time means:

- Greater personalization in customer engagement (e.g., remembering preferences, sentiment, outcomes).

- Enhanced collaboration with human teams (e.g., persistent memory of project context, task ownership).

- Improved autonomy as agents can pause/resume tasks, learn from outcomes, and evolve over time.

This unlocks AI as a true cognitive partner, not just an assistant.

Pros and Cons of Deploying Agentic AI

✅ Pros

- Autonomy & Efficiency: Reduces human supervision by handling multi-step tasks, improving throughput.

- Adaptability: Adjusts strategies in real time based on changes in context or inputs.

- Scalability: One Agentic AI system can simultaneously manage multiple tasks, users, or environments.

- Workforce Augmentation: Enables synthetic digital employees for knowledge work (e.g., AI project managers, analysts, engineers).

- Cost Savings: Reduces repetitive labor, increases automation ROI in both white-collar and blue-collar workflows.

❌ Cons

- Interpretability Challenges: Multi-step reasoning is often opaque, making debugging difficult.

- Failure Modes: Agents can take undesirable or unsafe actions if not constrained by strong guardrails.

- Integration Complexity: Requires orchestration between APIs, memory modules, and task logic.

- Security and Alignment: Risk of goal misalignment, data leakage, or unintended consequences without proper design.

- Ethical Concerns: Job displacement, over-dependence on automated decision-making, and transparency issues.

Agentic AI Use Cases and High-ROI Deployment Areas

Clients looking for immediate wins should focus on use cases that require repetitive decision-making, high coordination, or multi-tool integration.

📈 Quick Wins (0–3 Months ROI)

- Autonomous Report Generation

  - Agent pulls data from BI tools (Tableau, Power BI), interprets it, drafts insights, and sends out weekly reports.

  - Tools: LangChain + GPT-4 + REST APIs

- Customer Service Automation

  - Replace tier-1 support with AI agents that triage tickets, resolve FAQs, and escalate complex queries.

  - Tools: RAG-based agents + Zendesk APIs + Memory

- Marketing Campaign Agents

  - Agents that ideate, generate, and schedule multi-channel content based on performance metrics.

  - Tools: Zapier, Canva API, HubSpot, LLM + scheduler

🏗️ High ROI (3–12 Months)

- Synthetic Product Managers

  - AI agents that track product feature development, gather user feedback, prioritize sprints, and coordinate with Jira/Slack.

  - Ideal for startups or lean product teams.

- Autonomous DevOps Bots

  - Agents that monitor infrastructure, recommend configuration changes, and execute routine CI/CD updates.

  - Can reduce MTTR (mean time to resolution) and engineer fatigue.

- End-to-End Procurement Agents

  - Autonomous RFP generation, vendor scoring, PO management, and follow-ups, freeing procurement officers from clerical tasks.

What Can Agentic AI Deliver for Clients Today?

Your clients can expect the following from a well-designed Agentic AI system:

| Capability | Description |
|---|---|
| Goal-Oriented Execution | Automates tasks with minimal supervision |
| Adaptive Decision-Making | Adjusts behavior in response to context and outcomes |
| Tool Orchestration | Interacts with APIs, databases, SaaS apps, and more |
| Persistent Memory | Remembers prior actions, users, preferences, and histories |
| Self-Improvement | Learns from success/failure using logs or reward functions |
| Human-in-the-Loop (HiTL) | Allows optional oversight, approvals, or constraints |
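The Human-in-the-Loop row can be sketched as an approval gate: low-risk actions run automatically, while actions at or above a risk threshold require sign-off. The risk scores, the 0.5 threshold, and the action names are illustrative assumptions; how risk is scored in practice is a separate design question.

```python
from typing import Callable, Dict, List


def execute_with_oversight(
    action: str,
    risk: float,
    run: Callable[[str], str],
    approve: Callable[[str], bool],
    threshold: float = 0.5,
) -> Dict[str, str]:
    """Run low-risk actions directly; ask the approver before risky ones."""
    if risk >= threshold and not approve(action):
        return {"action": action, "status": "rejected"}
    return {"action": action, "status": "done", "result": run(action)}


audit: List[str] = []


def run(action: str) -> str:
    audit.append(action)                     # record what actually executed
    return f"executed {action}"


def deny_all(action: str) -> bool:
    """Stand-in for a human reviewer who rejects every request."""
    return False


low = execute_with_oversight("send_reminder_email", 0.1, run, deny_all)
high = execute_with_oversight("issue_refund", 0.9, run, deny_all)
print(low["status"], high["status"], audit)
```

In a real system the `approve` callback would route to a person (for example through a ticket or chat message) and the audit log would be persisted.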

Closing Thoughts: From Assistants to Autonomous Agents

Agentic AI represents a major evolution from passive assistants to dynamic problem-solvers. For business leaders, this means a new frontier of automation—one where AI doesn’t just answer questions but takes action.

Success in deploying Agentic AI isn’t just about plugging in a tool—it’s about designing intelligent systems with goals, governance, and guardrails. As foundation models continue to grow in reasoning and planning abilities, Agentic AI will be pivotal in scaling knowledge work and operations.