Introduction

Artificial Intelligence (AI) and Cognitive AI are two landmark developments in technology, each with its own characteristics and potential. While they share common roots, they diverge significantly in functionality and application. Let’s explore their similarities and differences from both a technical and a functional perspective, then look at their future directions and potential roles in small to medium business strategies.

Similarities and Overlap

Before delving into the differences, let’s highlight what unites Cognitive AI and Traditional AI. Both fall under the broad umbrella of AI, which implies the application of machine-based systems to mimic human intelligence and behavior. Both types of AI use algorithms and computational models to analyze data, make predictions, solve complex problems, and execute tasks with varying levels of autonomy.

Another similarity is their reliance on Machine Learning (ML), a subset of AI that allows systems to learn from data without explicit programming. Both Cognitive and Traditional AI use ML to refine their performance over time, becoming more accurate and efficient.
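To make "learning from data without explicit programming" concrete, here is a minimal sketch in Python. The transaction amounts, the labels, and the function names are all hypothetical and invented for illustration. It contrasts a cutoff chosen by a person with a cutoff chosen by a tiny learning routine that minimizes errors on labeled examples.

```python
# Hypothetical toy data: (order_amount, was_flagged) pairs invented for illustration.
training_data = [
    (20, False), (35, False), (50, False),
    (400, True), (650, True), (900, True),
]


def hand_written_rule(amount):
    """Explicit programming: a person picked the cutoff of 1000 by hand."""
    return amount > 1000


def learn_threshold(examples):
    """Pick the cutoff that misclassifies the fewest training examples."""
    candidates = sorted(amount for amount, _ in examples)
    best_cutoff, best_errors = None, len(examples) + 1
    for cutoff in candidates:
        # Count examples where the prediction (amount >= cutoff) disagrees with the label.
        errors = sum((amount >= cutoff) != label for amount, label in examples)
        if errors < best_errors:
            best_cutoff, best_errors = cutoff, errors
    return best_cutoff


learned_cutoff = learn_threshold(training_data)


def learned_rule(amount):
    """The same kind of rule, but with a cutoff derived from the data."""
    return amount >= learned_cutoff


print(learned_cutoff)           # cutoff derived from the examples
print(hand_written_rule(500))   # the hand-picked rule misses this amount
print(learned_rule(500))        # the learned rule flags it
```

Real ML systems fit far richer models than a single threshold, but the principle is the same: the behavior comes from the examples rather than from a value someone typed in.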

Artificial Intelligence and Cognitive AI share a fundamental objective: to replicate, augment, or even transcend human abilities in specific contexts. Both fields leverage advanced algorithms, machine learning techniques, and immense volumes of data to train systems capable of performing tasks traditionally requiring human intelligence. However, the degree to which they seek to emulate human cognition and the complexity of the tasks they undertake distinguishes them.

Artificial Intelligence vs. Cognitive AI

Artificial Intelligence

Artificial Intelligence (AI) encompasses a broad spectrum of technologies that emulate human intelligence. These technologies range from rule-based systems that follow pre-defined algorithms to more advanced machine learning and deep learning systems that learn from data and improve over time. The primary goal is to create systems that can solve specific problems, often in a way that surpasses human capability in terms of speed, accuracy, or scalability.

Techniques like deep learning have allowed AI to solve complex problems and run intricate models, with applications spanning sectors including commerce, healthcare, and digital art. For example, AI tools like GitHub’s Copilot can expedite programming by converting natural language prompts into coding suggestions. Similarly, OpenAI’s GPT models, from GPT-3 through GPT-4, can generate human-like text, aiding in writing tasks [1].

Cognitive AI

Cognitive AI, on the other hand, aims to emulate human cognition, going beyond specific problem-solving to achieve a comprehensive understanding of human perception, memory, attention, language, intelligence, and consciousness. Unlike traditional AI, where a specific algorithm is designed to solve a particular problem, cognitive computing seeks a universal algorithm for the brain, capable of solving a vast array of problems [2].

Cognitive AI utilizes multiple AI technologies, such as natural language processing and image recognition, to enable machines to understand and respond to human interactions more accurately. It’s less about replacing human cognition and more about augmenting human expertise with AI’s capabilities. An example is IBM’s Watson for Oncology, which helps healthcare experts investigate a variety of treatment alternatives for patients with cancer [2].

Technical and Functional Differences

Cognitive AI vs Traditional AI: A Technical Perspective

Despite these shared attributes, Cognitive AI and Traditional AI are fundamentally different in their methodologies and objectives.

Traditional AI, or Narrow AI, is designed to perform specific tasks, such as speech recognition, image analysis, or natural language processing. It uses rule-based algorithms, statistical techniques, and ML to analyze mostly structured data and produce predictable, well-defined outputs. Traditional AI does not understand or interpret information in the way humans do; it simply processes data according to predefined rules or learned patterns.

On the other hand, Cognitive AI, which is sometimes linked to the pursuit of Artificial General Intelligence (AGI) or Strong AI, aims to mimic human cognition. It not only performs tasks but also comprehends, reasons, and learns from unstructured data like text, images, and voice. Cognitive AI uses techniques like deep learning, a subset of ML, to understand the context, sentiment, and semantics of information. Its goal is not just to process data but to understand and interpret it in a human-like way.

Cognitive AI vs Traditional AI: A Functional Perspective

The distinction between Cognitive AI and Traditional AI becomes even more pronounced when looking at their functional perspectives.

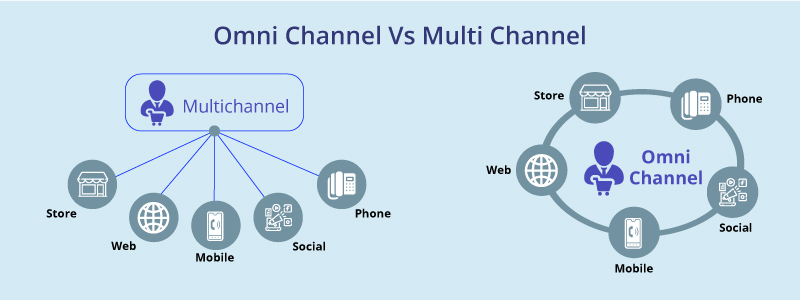

Traditional AI excels in tasks with clear-cut rules and objectives. It suits repetitive, high-volume tasks where speed and accuracy are crucial, the kind of work where Robotic Process Automation (RPA) was once the popular choice. In customer service, for instance, Traditional AI can power chatbots that provide instant responses to common queries.
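As a rough illustration of a chatbot that answers common queries from clear-cut rules, here is a minimal Python sketch. The keywords, the canned answers, and the `reply` function are hypothetical and invented for this example; production chatbots are considerably more elaborate.

```python
import re

# Hypothetical FAQ rules: keyword -> canned answer (invented for illustration).
FAQ_RULES = {
    "hours": "We are open 9am to 5pm, Monday to Friday.",
    "refund": "Refunds are processed within 5 business days.",
    "shipping": "Standard shipping takes 3 to 7 days.",
}
FALLBACK = "Sorry, I did not understand. A human agent will follow up."


def reply(message):
    """Return the first canned answer whose keyword appears in the message."""
    # Lowercase the message and split it into alphabetic words.
    words = set(re.findall(r"[a-z]+", message.lower()))
    for keyword, answer in FAQ_RULES.items():
        if keyword in words:
            return answer
    # No rule matched, so the bot cannot help.
    return FALLBACK


print(reply("What are your hours?"))
print(reply("My parcel arrived damaged"))
```

The second message falls through to the fallback because no rule anticipates it. That rigidity is the trade-off of rule-driven systems: they are fast and consistent on expected inputs, and they do nothing useful with anything else.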

On the other hand, Cognitive AI shines in complex scenarios that require understanding and interpretation. It can handle unstructured data and ambiguous situations, where the ‘right’ answer isn’t defined by rigid rules. In healthcare, Cognitive AI can analyze medical images, detect anomalies that might be overlooked by human eyes, and even suggest treatment options based on the patient’s medical history.

Future Directions

As AI evolves, both Cognitive and Traditional AI will continue to grow, albeit in different directions.

Traditional AI will become more efficient and specialized, with advances in algorithms and computational power enabling it to process data at unprecedented speeds. It will remain the go-to solution for tasks that require speed, accuracy, and consistency, such as fraud detection, recommendation systems, and automation of routine tasks.

Cognitive AI, meanwhile, will push the boundaries of what machines can understand and accomplish. With advancements in Natural Language Processing (NLP), neural networks, and deep learning, Cognitive AI will become more adept at understanding human language, emotions, and context. It might even achieve the elusive goal of AGI, where machines can perform any intellectual task that a human can.

The future of AI and cognitive computing heralds a transformative era in technology, with advancements shaping a multitude of sectors, including healthcare, financial services, supply chain management, and more.

In AI, the development of tools like AlphaFold has revolutionized our understanding of protein structures, opening the door for medical researchers to develop new drugs and vaccines. AI technologies like DALL-E 2, which can generate detailed images from text descriptions, have the potential to revolutionize digital art [1].

Cognitive AI, meanwhile, is expected to advance augmented expertise, with humans and machines working together. For example, technologies like time-series databases are becoming popular for analyzing trends and patterns over time, while machine learning models can predict future trends. These advancements are expected to help solve many of the tough problems we face in society [2].

Leveraging AI and Cognitive AI in Small to Medium Business Strategies

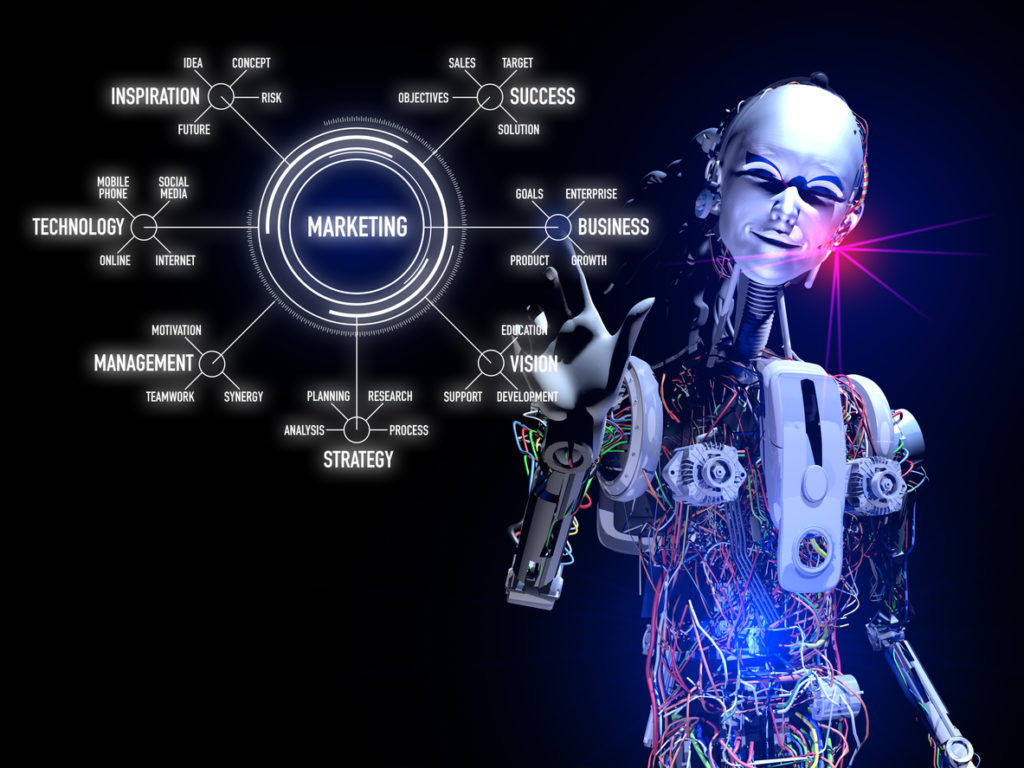

Both AI and Cognitive AI have immense potential to transform small and medium businesses (SMBs). AI technologies can automate repetitive tasks, analyze vast amounts of data for insights, and amplify the capabilities of workers. For example, AI can provide 24/7 customer support, help predict loan risks, and analyze client data for targeted marketing campaigns [1].

Cognitive AI can also play a significant role in SMBs. By mimicking human cognition, it can enhance decision-making processes, improve customer interactions, and deliver personalized experiences. The ability to understand and interact in human language allows cognitive AI to deliver more intuitive and sophisticated services. For instance, customer service chatbots can understand customer queries in natural language and provide relevant responses, improving customer experience and efficiency.

In addition, cognitive AI can provide SMBs with predictive insights by analyzing historical and real-time data. This can help businesses anticipate customer needs, market trends, and potential risks, enabling them to make informed strategic decisions.
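A very small example of turning historical data into a forward-looking estimate is a straight-line trend fit. The Python sketch below is a simplification offered under stated assumptions: the monthly sales figures and function names are hypothetical, and an ordinary least-squares line stands in for the much richer models a cognitive system would use.

```python
def fit_linear_trend(values):
    """Fit y = intercept + slope * x by ordinary least squares, with x = 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations to fit a trend")
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(values) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, values))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def forecast(values, steps_ahead=1):
    """Extend the fitted line steps_ahead periods past the last observation."""
    slope, intercept = fit_linear_trend(values)
    return intercept + slope * (len(values) - 1 + steps_ahead)


# Hypothetical monthly sales figures, invented for illustration.
monthly_sales = [100, 110, 120, 130, 140, 150]
next_month = forecast(monthly_sales)
print(next_month)
```

A straight line ignores seasonality and sudden shifts, so in practice an SMB would treat an estimate like this as a starting point for a decision rather than a prediction to rely on.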

Companies that fail to adopt AI and Cognitive AI risk falling behind as these technologies become increasingly essential to maintaining a competitive edge. Newer companies have a distinct advantage here, since they can invest in the latest technologies from the start [1].

Conclusion

AI and Cognitive AI represent significant technological advancements with far-reaching implications for businesses of all sizes. As these technologies continue to evolve at a rapid pace, they offer immense potential to transform business operations, strategies, and outcomes. The key to leveraging these technologies lies in understanding their unique capabilities and identifying the most effective ways to integrate them into existing business processes.